Devising software routines that could track the finger movements and convert them to instructions for what should be happening with images on the screen was tougher. Sensing the exact location of fingers was one challenge. The screen could therefore serve as both an output of imagery and an input of touches made on that imagery. Han soon discovered that the acrylic panel could also serve as a diffusion screen a projector behind the panel, linked to a computer, could beam images toward it, and they would diffuse through to the other side. The cameras can track this leakage from many points at once. Cameras behind the screen sense this leaking light, or FTIR, revealing the location being touched. But when someone places a finger on one face of the sheet, some of the internally reflecting light beams hit it and scatter off, bouncing through the sheet and out the opposite face. The light streams through, reflecting internally off the sheet walls, much as light flows though an optical fiber. Light-emitting diodes (LEDs) around the edges pump infrared light into the sheet. Han ultimately devised a rectangular sheet of clear acrylic that acts like a waveguide, essentially a pipe for light waves. But tracking a randomly moving finger would have required an insane amount of wiring behind the screen, which also would have limited the screen’s functionality. He first considered building a very high resolution version of the single-touch screens used in automated teller machines and kiosks, which typically sense the electrical capacitance of a finger touching predefined points on the screen. Thus began his six-year absorption with multi-touch interfaces. He imagined that an electronic system could optically track fingertips placed on the face of a clear computer monitor. He noticed how crisply his fingerprint on the outside of the glass appeared when viewed through the water at a steep angle. 
Han, who describes himself as “a very tactile person,” became aware of the effect one day when he was looking through a full glass of water. Perhaps most fundamental was exploiting an optical effect known as frustrated total internal reflection (FTIR), which is also used in fingerprint-recognition equipment. The solution required both hardware and software innovations. But around 2000, at N.Y.U., Han began a journey to overcome one of the technology’s toughest hurdles: achieving fine-resolution fingertip sensing. Rudimentary work on multi-touch interfaces dates to the early 1980s, according to Bill Buxton, a principal researcher at Microsoft Research. Looking ahead, Han expects the technology to find a home in graphically intense businesses such as energy trading and medical imaging. states to depict voting results, the anchors, standing in front of the screen, dramatically zoomed in and out of states, even counties, simply by moving their fingers across the map. News anchors on CNN used a big Perceptive Pixel system during coverage of the presidential primaries that boldly displayed all 50 U.S. Several early adopters have purchased complete systems, including intelligence agencies that need to quickly compare geographically coordinated surveillance images in their war rooms. When Han wants the display to access different files he taps it twice, bringing up charts or menus that can also be tapped. As many as 10 or more video feeds can run simultaneously, and there is no toolbar in sight.

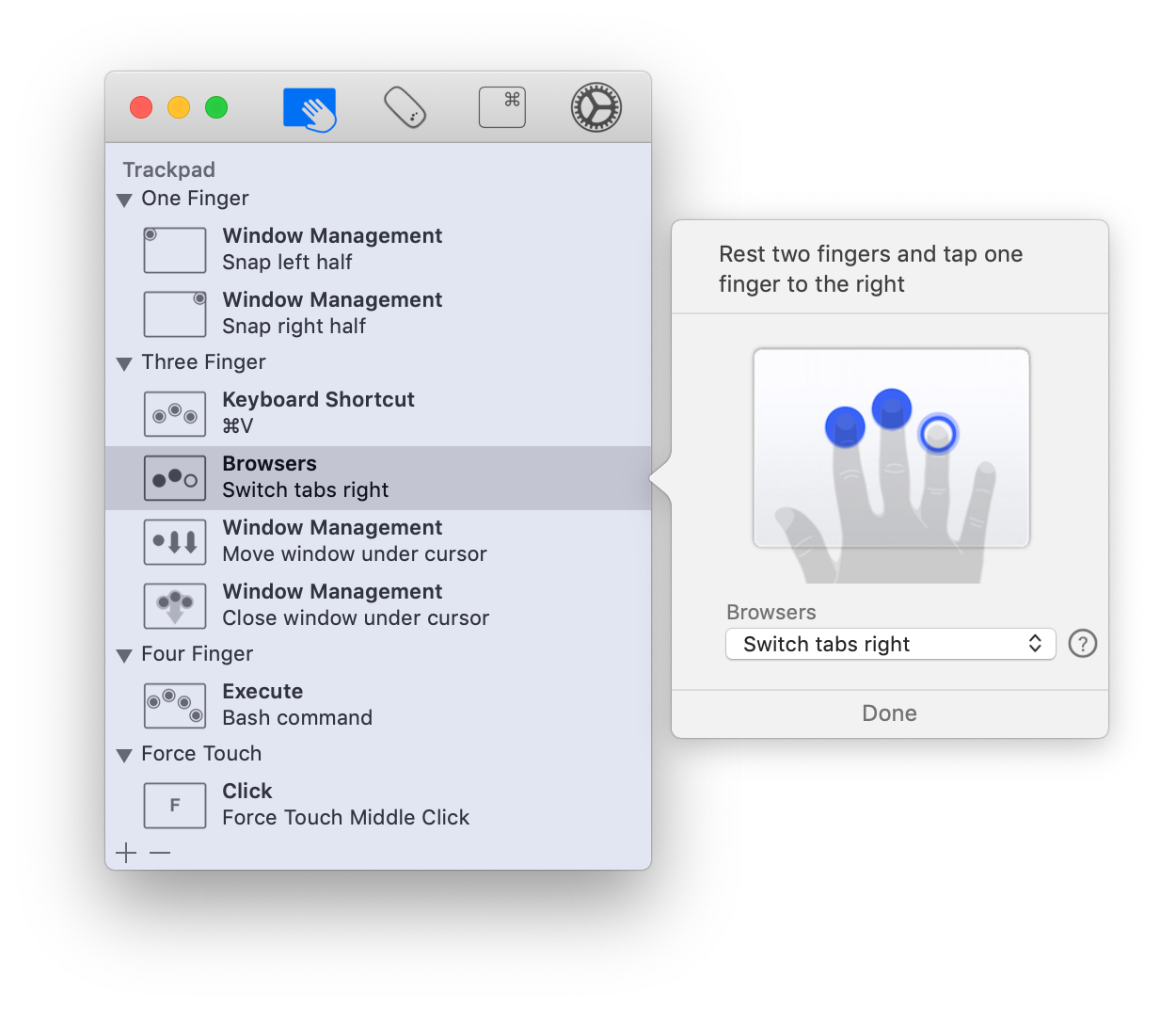

Han steps up to the electronic wall and unleashes a world of images using nothing but the touch of his fingers. Walking into his company’s lobby, one is greeted by a three-by-eight-foot flat screen. Jeff Han, a consulting computer scientist at New York University and founder of Perceptive Pixel in New York City, is at the forefront of multi-touch technology. Yet the technology is already being applied in more far-flung situations in which anyone without any training can reach out during a brainstorming session and move or mark up objects and plans. It is easy to imagine how photographers, graphic designers or architects-professionals who must manipulate lots of visual material and who often work in teams-would welcome this multi-touch computing. Engineers have developed much larger screens that respond to 10 fingers at once, even to multiple hands from multiple people. But in laboratories around the world at the time of the iPhone’s launch, multi-touch screens had vastly outgrown two-finger commands. The operations felt intuitive, even sensuous. The tactile pleasure the interface provides beyond its utility quickly brought it accolades. Images on the screen can be moved around with a fingertip and made bigger or smaller by placing two fingertips on the image’s edges and then either spreading those fingers apart or bringing them closer together. When Apple’s iPhone hit the streets last year, it introduced so-called multi-touch screens to the general public.
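The FTIR trick described in this article comes down to Snell’s law. Light inside the acrylic stays trapped only while it strikes the surface at more than the critical angle, and that angle depends on what is on the other side of the surface. The sketch below assumes typical refractive indices: about 1.49 for acrylic, 1.00 for air, and a rough, hypothetical 1.44 for skin. These values are illustrations, not figures from Han’s hardware.

```python
import math
from typing import Optional

# Assumed refractive indices: typical textbook values, not figures from the article.
N_ACRYLIC = 1.49  # clear acrylic (PMMA)
N_AIR = 1.00
N_SKIN = 1.44     # rough, hypothetical value for a fingertip in contact with the sheet


def critical_angle_deg(n_inside: float, n_outside: float) -> Optional[float]:
    """Angle from the surface normal beyond which light is totally internally
    reflected. Returns None when the outside medium is at least as optically
    dense as the inside, so total internal reflection cannot occur."""
    if n_outside >= n_inside:
        return None
    return math.degrees(math.asin(n_outside / n_inside))


def stays_in_sheet(angle_deg: float, n_inside: float, n_outside: float) -> bool:
    """True if a ray striking the boundary at angle_deg is reflected back inside."""
    critical = critical_angle_deg(n_inside, n_outside)
    return critical is not None and angle_deg > critical


ray_angle = 60.0  # a ray bouncing along the sheet, 60 degrees from the normal
print(critical_angle_deg(N_ACRYLIC, N_AIR))          # about 42 degrees
print(critical_angle_deg(N_ACRYLIC, N_SKIN))         # about 75 degrees
print(stays_in_sheet(ray_angle, N_ACRYLIC, N_AIR))   # True: trapped at bare acrylic
print(stays_in_sheet(ray_angle, N_ACRYLIC, N_SKIN))  # False: escapes under the finger
```

A ray that is trapped where the acrylic meets air can escape where a finger presses. That leak is the "frustrated" reflection the cameras look for.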
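The article says cameras behind the screen see touches as spots of leaking infrared light, and can track many spots at once. A common generic way to turn such a camera frame into touch coordinates is to threshold the image, group bright neighboring pixels into blobs, and report each blob’s centroid. The sketch below does this in pure Python. It is not Perceptive Pixel’s actual pipeline, and the `threshold` and `min_pixels` defaults are hypothetical.

```python
from collections import deque
from typing import List, Tuple

Frame = List[List[int]]  # grayscale IR intensities, indexed frame[y][x]


def find_touches(frame: Frame, threshold: int = 128,
                 min_pixels: int = 2) -> List[Tuple[float, float]]:
    """Return (x, y) centroids of bright 4-connected regions, in scan order."""
    height = len(frame)
    width = len(frame[0]) if frame else 0
    seen = [[False] * width for _ in range(height)]
    touches = []
    for y in range(height):
        for x in range(width):
            if seen[y][x] or frame[y][x] < threshold:
                continue
            # Breadth-first flood fill gathers every bright pixel in this blob.
            queue = deque([(x, y)])
            seen[y][x] = True
            pixels = []
            while queue:
                cx, cy = queue.popleft()
                pixels.append((cx, cy))
                for nx, ny in ((cx + 1, cy), (cx - 1, cy), (cx, cy + 1), (cx, cy - 1)):
                    if (0 <= nx < width and 0 <= ny < height
                            and not seen[ny][nx] and frame[ny][nx] >= threshold):
                        seen[ny][nx] = True
                        queue.append((nx, ny))
            if len(pixels) >= min_pixels:  # drop single-pixel sensor noise
                touches.append((sum(p[0] for p in pixels) / len(pixels),
                                sum(p[1] for p in pixels) / len(pixels)))
    return touches


# Two fingertips plus one noisy pixel in a tiny synthetic frame.
sample_frame = [
    [0, 0, 0, 0, 0, 0],
    [0, 200, 220, 0, 0, 0],
    [0, 210, 230, 0, 0, 190],
    [0, 0, 0, 0, 0, 180],
    [0, 0, 0, 255, 0, 0],
]
print(find_touches(sample_frame))  # [(1.5, 1.5), (5.0, 2.5)]
```

A real system would also subtract a background image and calibrate camera coordinates to screen coordinates. Those steps are omitted here.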
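The article calls tracking finger movements the tougher, software half of the problem. One basic ingredient is giving each finger a persistent identity from one camera frame to the next, so that a drag is seen as one continuous gesture. The sketch below swaps in simple greedy nearest-neighbor matching; it is not Han’s method. `TouchTracker` and its `max_jump` limit are hypothetical names.

```python
import math
from itertools import count
from typing import Dict, List, Tuple

Point = Tuple[float, float]


class TouchTracker:
    """Hypothetical tracker: assigns persistent IDs to touch points across
    frames by greedy nearest-neighbour matching."""

    def __init__(self, max_jump: float = 30.0):
        self.max_jump = max_jump  # assumed max distance a finger moves per frame
        self.active: Dict[int, Point] = {}
        self._ids = count(1)

    def update(self, points: List[Point]) -> Dict[int, Point]:
        # Every (distance, existing id, new point index) pair, closest first.
        pairs = sorted(
            (math.dist(old, new), tid, i)
            for tid, old in self.active.items()
            for i, new in enumerate(points)
        )
        matched_ids, matched_pts, result = set(), set(), {}
        for d, tid, i in pairs:
            if d > self.max_jump:
                break  # all remaining pairs are even farther apart
            if tid in matched_ids or i in matched_pts:
                continue
            matched_ids.add(tid)
            matched_pts.add(i)
            result[tid] = points[i]
        # Unmatched points are new touches; unmatched old IDs were lifted.
        for i, p in enumerate(points):
            if i not in matched_pts:
                result[next(self._ids)] = p
        self.active = result
        return result


tracker = TouchTracker()
tracker.update([(10, 10), (100, 100)])
print(tracker.update([(104, 98), (14, 12)]))  # IDs follow the fingers, not list order
```

Greedy matching is easy to follow but can mislabel fingers that cross paths. An optimal assignment, such as the Hungarian algorithm, is the usual upgrade.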
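The two-finger zoom described at the start of this article can be expressed as a ratio. The zoom factor is the current distance between the fingertips divided by their starting distance. Movement of the midpoint between the fingers gives a pan. The function below is a hypothetical minimal sketch of that calculation, not Apple’s or Perceptive Pixel’s code.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def pinch_transform(start: Tuple[Point, Point],
                    now: Tuple[Point, Point]) -> Tuple[float, Point]:
    """Return (scale, translation) implied by two fingertips moving from
    `start` to `now`. scale > 1 means the fingers spread apart (zoom in)."""
    (a0, b0), (a1, b1) = start, now
    d0 = math.dist(a0, b0)
    if d0 == 0:
        raise ValueError("starting fingertips coincide")
    scale = math.dist(a1, b1) / d0
    # Pan: how far the midpoint between the two fingers has moved.
    mid0 = ((a0[0] + b0[0]) / 2, (a0[1] + b0[1]) / 2)
    mid1 = ((a1[0] + b1[0]) / 2, (a1[1] + b1[1]) / 2)
    return scale, (mid1[0] - mid0[0], mid1[1] - mid0[1])


# Fingers spread from 100 px apart to 200 px apart around the same midpoint.
print(pinch_transform(((100, 100), (200, 100)), ((50, 100), (250, 100))))  # (2.0, (0.0, 0.0))
```

Applying the scale about the fingers’ midpoint, rather than the image origin, keeps the content under the fingers in place. That is why the gesture feels direct.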
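Han opens files by tapping the display twice. A double tap is usually recognized as two taps that land close together in both time and space. The sketch below assumes a 0.3-second window and a 20-pixel radius. Both numbers are hypothetical defaults, not values reported in the article.

```python
import math
from typing import Optional, Tuple

Point = Tuple[float, float]


class DoubleTapDetector:
    """Reports a double tap when a tap follows the previous one quickly and
    nearby. Thresholds are hypothetical defaults."""

    def __init__(self, max_interval: float = 0.3, max_distance: float = 20.0):
        self.max_interval = max_interval  # seconds allowed between taps
        self.max_distance = max_distance  # pixels allowed between tap positions
        self._last: Optional[Tuple[float, Point]] = None

    def tap(self, t: float, pos: Point) -> bool:
        last = self._last
        if (last is not None and t - last[0] <= self.max_interval
                and math.dist(last[1], pos) <= self.max_distance):
            self._last = None  # consume the pair so a third tap starts fresh
            return True
        self._last = (t, pos)
        return False


detector = DoubleTapDetector()
print(detector.tap(0.0, (50, 50)))  # False: first tap
print(detector.tap(0.2, (55, 52)))  # True: quick and close, so a double tap
```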
